My responsibility.

I worked across strategy, scope and interaction design, translating user research and AI constraints into concrete product decisions. My focus was on defining what the product should do, why it should do it, and how users interact with it, particularly in contexts where trust, accuracy and ethical considerations are critical.

I led research and synthesis, facilitated ideation and prioritisation workshops, and shaped interaction models that balanced user needs with technical feasibility. I was also directly involved in prototyping and usability testing, ensuring that early insights were carried through to a high-fidelity, testable concept.

Confidentiality: This case study is based on work I did for an IT client and reflects my actual contributions. To respect confidentiality obligations, the product-sensitive details have been anonymised.

A product and system problem, not just a UI one.

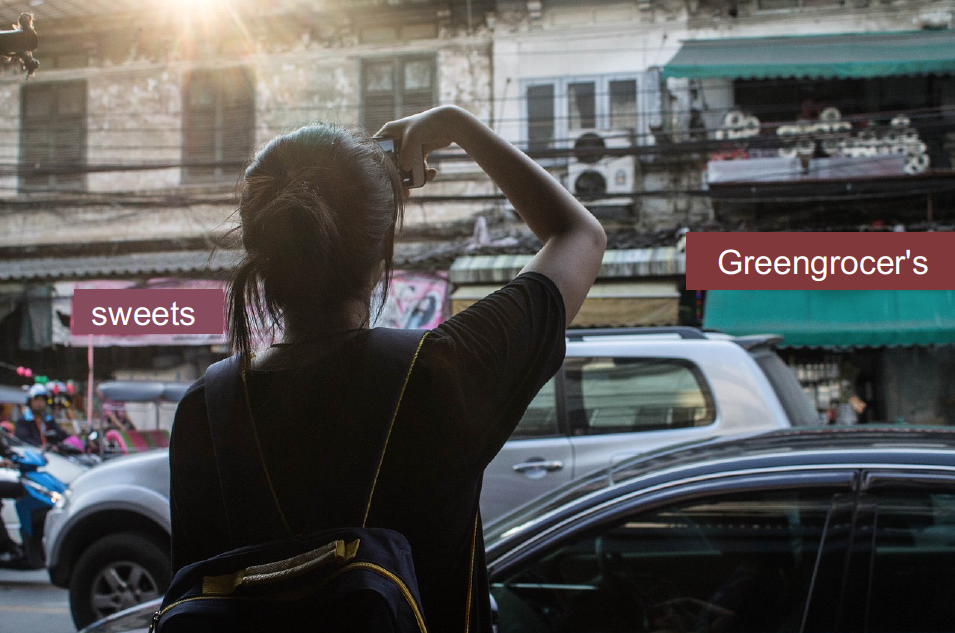

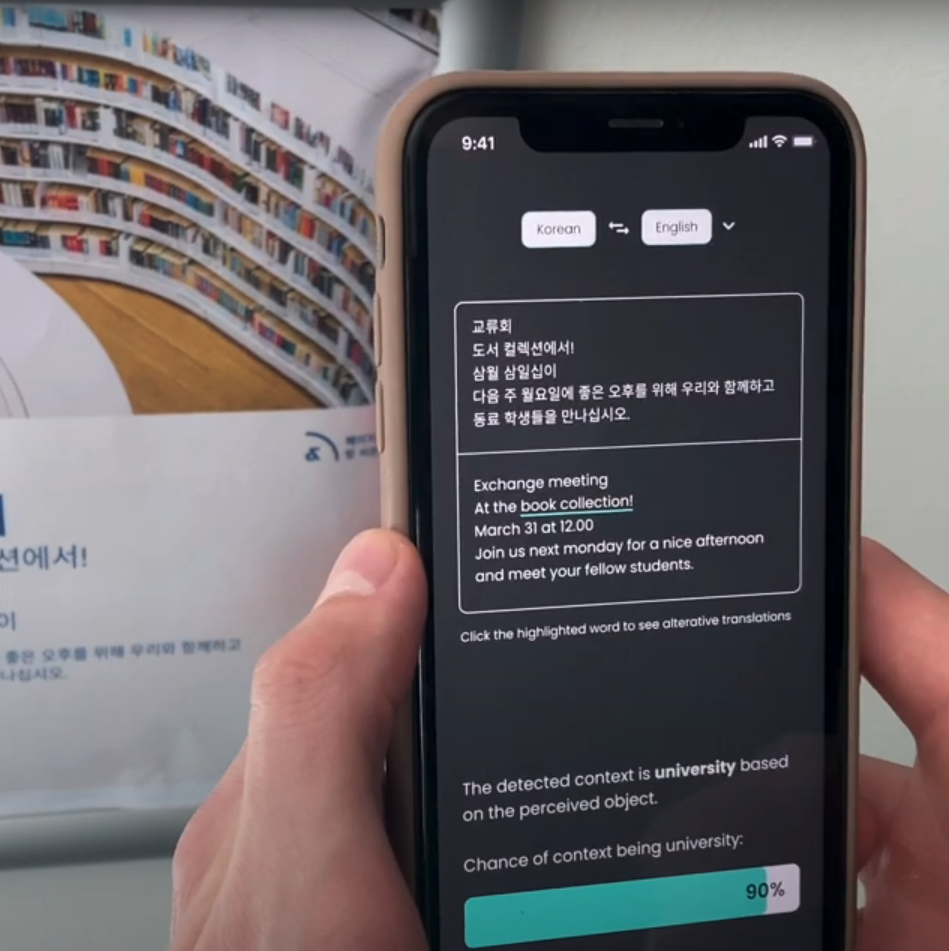

Existing camera-translation apps often break down in real travel situations. Users lose trust because of unreadable overlays, ambiguous translations and a lack of transparency, especially in official or high-stakes contexts. Rather than optimising visual polish alone, I framed the challenge as a product and system problem, guided by three questions:

How might translation become context-aware, not just text-aware?

How do we design for trust when accuracy cannot be guaranteed?

How can users remain in control when AI output is uncertain?

This framing guided all downstream design decisions, from feature scope to interaction models.

From research to product decisions.

I co-designed and conducted two of the seven semi-structured interviews with expats and international students, supported by market and desk research into translation tools and AI/ML capabilities. Insights were synthesised through affinity mapping, which revealed three dominant needs.

01. Clarity over speed: Readable, stable translations matter more than instant results.

02. Interpretability: Users want to see alternatives when meaning is ambiguous.

03. Trust & autonomy: Users distrust black-box translations in official contexts.

Rather than treating these as usability issues, I translated them into product principles that shaped scope:

01. Expose uncertainty: The system must expose uncertainty instead of hiding it.

02. Allow intervention: Users must be able to intervene without restarting the task.

03. Acknowledge failure: Failure cases must be acknowledged and reportable.

Making ideas tangible before designing them.

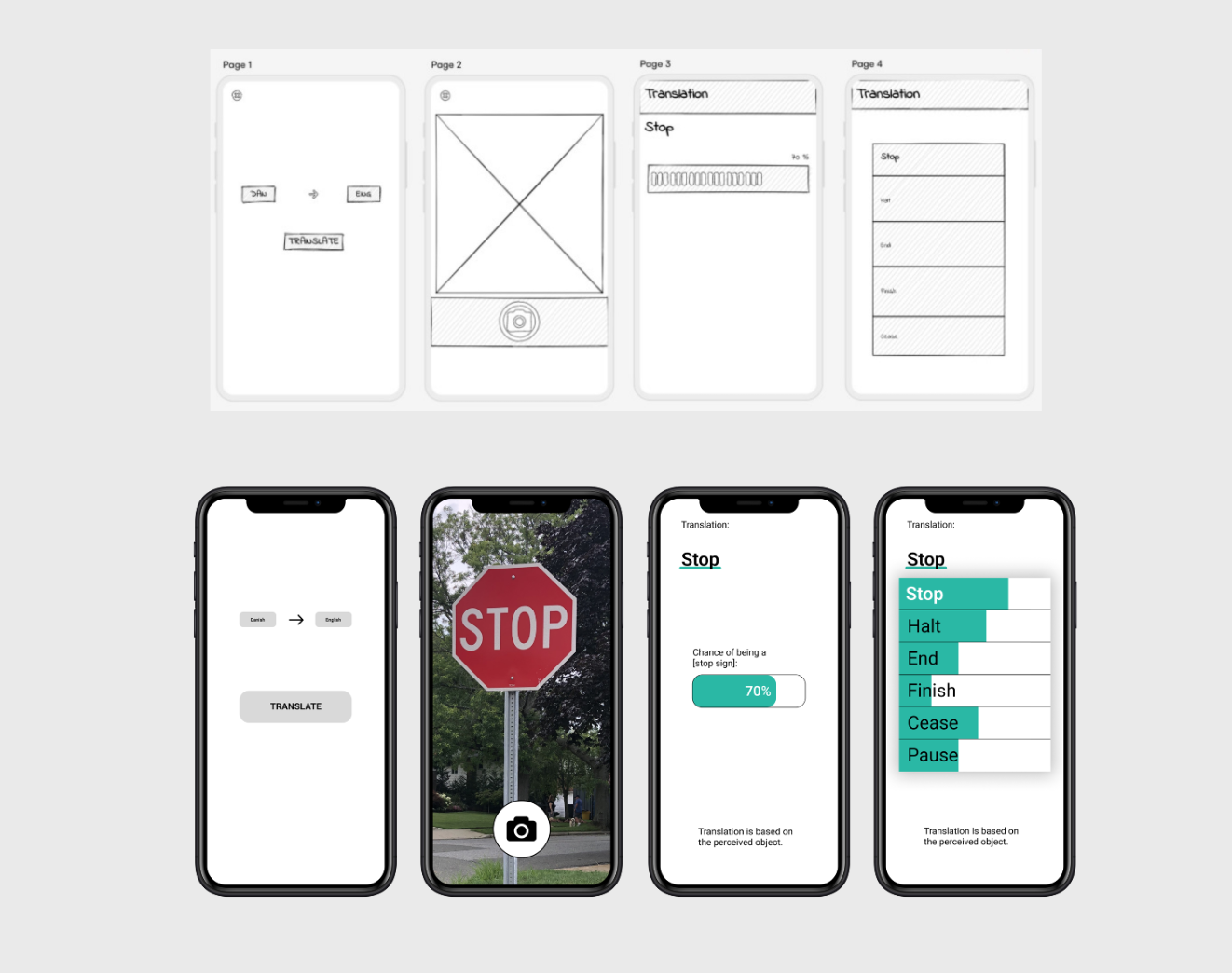

Before defining the interaction model, I led an exploratory paper-prototyping workshop to make early ideas tangible and test them through use. The focus was on learning through rapid experimentation rather than visual fidelity.

Exploratory paper-prototyping workshop — testing different approaches to automation and user control.

The team created and used multiple low-fidelity prototypes, role-playing real travel scenarios to explore different approaches to automation, user control and AI feedback. This helped us quickly surface trust and usability questions, such as when automatic translation becomes intrusive and how much control users need in ambiguous contexts.

The workshop allowed us to discard impractical ideas early and identify promising interaction patterns. These insights directly informed later design decisions, including the split between auto and manual modes and the introduction of visible alternative translations.

Four iterations to the final UI.

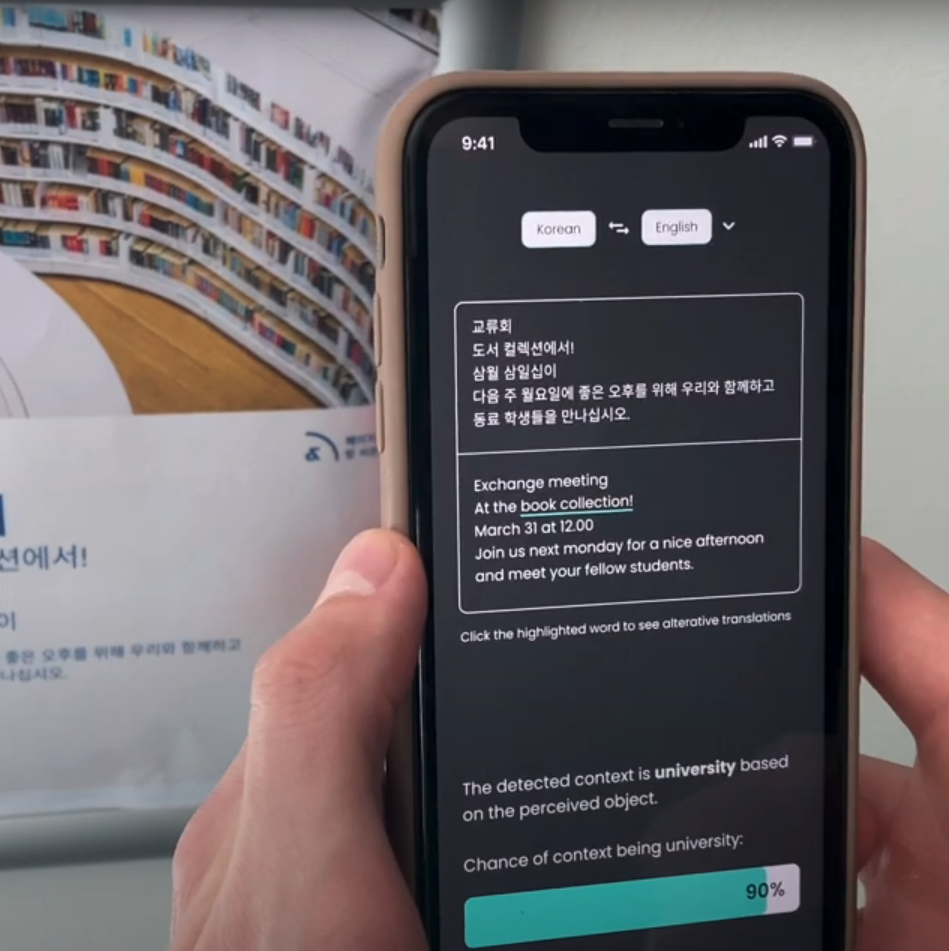

I was directly involved in building and testing the prototype, translating interaction logic into a usable interface. It took four iterations to arrive at the final UI. Each iteration was tested with at least two end users to identify design and interaction issues early.

From Uizard to Figma — wireframes and first prototype, Lo-Fi to Mid-Fi.

Testing showed improved perceived trust and clarity compared with existing translation apps, particularly due to visible alternatives and the ability to intervene without breaking the flow. Through think-aloud usability sessions, we evaluated readability of contextual overlays, discoverability of alternative translations, and user confidence when interacting with uncertain AI output.

Usability testing sessions — evaluating trust and clarity with real users.

Two modes, one coherent experience.

I led ideation and prioritisation sessions to define how contextual AI should behave in practice. Instead of a single interaction flow, I proposed two complementary modes, a deliberate product decision balancing convenience with control.

Auto mode: Efficient and uninterrupted for everyday, low-risk scenarios. Users remain in flow without needing to interact.

Manual mode: Precision and control for high-stakes situations. Users select what to translate and see contextual alternatives.
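
As a rough illustration of how the auto and manual modes could differ in behaviour, here is a minimal Python sketch. It is not taken from the project: the names `Mode`, `Region` and `render`, the confidence scores and the 0.8 threshold are all hypothetical, chosen only to show the split between translating everything in view and translating user-selected text with visible alternatives.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    AUTO = "auto"      # everyday, low-risk: translate everything in view
    MANUAL = "manual"  # high-stakes: user picks regions and sees alternatives


@dataclass
class Region:
    """One detected block of text with candidate translations (hypothetical model)."""
    source: str
    candidates: list[tuple[str, float]]  # (translation, confidence 0..1), best first
    selected: bool = False               # True once the user has tapped the region


def render(regions: list[Region], mode: Mode, threshold: float = 0.8) -> list[dict]:
    """Return what the overlay would show for each region in the given mode."""
    shown = []
    for region in regions:
        if mode is Mode.MANUAL and not region.selected:
            continue  # manual mode only translates what the user selected
        best_text, best_conf = region.candidates[0]
        item = {
            "source": region.source,
            "text": best_text,
            # assumed rule: flag low-confidence output instead of hiding it
            "uncertain": best_conf < threshold,
        }
        if mode is Mode.MANUAL:
            # expose the remaining candidates so the user can override the top choice
            item["alternatives"] = [text for text, _ in region.candidates[1:]]
        shown.append(item)
    return shown


# Illustrative data only, not from the evaluation.
regions = [
    Region("Ausgang", [("Exit", 0.95)]),
    Region("Anmeldung", [("Registration", 0.6), ("Login", 0.3)], selected=True),
]
auto_view = render(regions, Mode.AUTO)
manual_view = render(regions, Mode.MANUAL)
```

In this sketch both modes share one rendering path, so switching modes changes how much is shown rather than restarting the task.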

To address ethical and trust concerns, I introduced a user-autonomy reporting system that allows users to flag incorrect or offensive translations and block content. This feature was not added as an afterthought, but as a core interaction that acknowledges the limits of AI output.

The reporting system: a core ethical interaction, not an afterthought.
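
To make the reporting interaction more concrete, the following Python sketch shows one way flagging and blocking could be modelled. The case study does not specify a data model, so the `Report` fields, the `Reason` labels and the `ReportLog` class are assumptions for illustration only.

```python
from dataclasses import dataclass, field
from enum import Enum


class Reason(Enum):
    INCORRECT = "incorrect"
    OFFENSIVE = "offensive"


@dataclass(frozen=True)
class Report:
    source: str          # original text
    translation: str     # translation the user flagged
    reason: Reason
    block: bool = False  # user also asked to hide this translation


@dataclass
class ReportLog:
    """Collects user reports and tracks blocked translations (hypothetical)."""
    reports: list[Report] = field(default_factory=list)
    blocked: set[tuple[str, str]] = field(default_factory=set)

    def flag(self, report: Report) -> None:
        # Every report is kept, so failure cases stay visible and reviewable.
        self.reports.append(report)
        if report.block:
            self.blocked.add((report.source, report.translation))

    def is_blocked(self, source: str, translation: str) -> bool:
        return (source, translation) in self.blocked


# Illustrative usage with made-up content.
log = ReportLog()
log.flag(Report("source text", "bad translation", Reason.OFFENSIVE, block=True))
log.flag(Report("source text", "odd translation", Reason.INCORRECT))
```

Keeping flagging and blocking in one call reflects the idea that users can intervene in a single step, without leaving the translation flow.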

Early evaluation results

75%: Improved trust in translation

85%: Clarity vs existing tools

7: Participants (directional, not definitive)

* Figures are based on an early evaluation with a small sample of seven participants and should be treated as directional. Wider validation with a larger user base remains the logical next step.

Reflection and project potential.

The work strengthened my ability to bridge user needs, emerging technologies and responsible design. In a client or production context, the next step would be to validate the system with a broader user base and collaborate closely with engineers to implement a functional proof of concept.

ContextLens has clear product potential beyond travel. Its context-aware interaction model and user-control mechanisms make it applicable to high-trust domains such as public services, education and accessibility. With further validation and technical implementation, the concept could scale as a standalone product or as an AI-driven translation layer within existing platforms.